"Google is expanding its real-time caption feature, Live Captions, from Pixel phones to anyone using a Chrome browser, as first spotted by XDA Developers. Live Captions uses machine learning to spontaneously create captions for videos or audio where none existed before, and making the web that much more accessible for anyone who’s deaf or hard of hearing.

When enabled, Live Captions automatically appear in a small, moveable box in the bottom of your browser when you’re watching or listening to a piece of content where people are talking. Words appear after a slight delay, and for fast or stuttering speech, you might spot mistakes. But in general, the feature is just as impressive as it was when it first appeared on Pixel phones in 2019. Captions will even appear with muted audio or your volume turned down, making it a way to “read” videos or podcasts without bugging others around you. And even better, Google says Live Captions works offline, too.

......

Live Captions can be enabled in the latest version of Chrome by going to Settings, then the “Advanced” section, and then “Accessibility.” (If you’re not seeing the feature, try manually updating and restarting your browser.) When you toggle them on, Chrome will quickly download some speech recognition files, and then captions should appear the next time your browser plays audio where people are talking. "

https://www.theverge.com/2021/3/17/22337074/chrome-real-time-live-captions-audio-accessibility

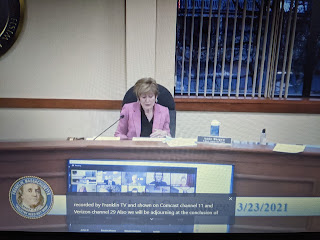

I was able to capture the screen display of Tuesday's School Committee meeting with the feature enabled. The black caption box sits on top of the Zoom participants, displayed in front of Chairperson Anne Bergen. It does well at providing an accurate caption. It is NOT perfect. There are mistakes, some of them humorous, but most of those seen thus far can be made out phonetically even when they are not spelled correctly in the caption.

One really cool and potentially useful feature is that the captioning works for audio or video EVEN if the system sound is muted.


Google improves accessibility of content for those with hearing problems